Integrations

BunkerWeb Cloud

BunkerWeb Cloud is a managed Web Application Firewall (WAF) and reverse proxy solution that lets you secure your web applications without installing BunkerWeb in your own infrastructure. A subscription gives you a complete BunkerWeb stack hosted in the cloud with dedicated resources. The Standard offering provides 2 replicated instances for high availability, each with 8 GB RAM and 2 CPUs.

Key Benefits

Order your BunkerWeb Cloud instance and get access to:

- Instant Deployment: No installation required in your infrastructure

- High Availability: Replicated instances with automatic load balancing

- Integrated Monitoring: Access to Grafana for logs and metrics visualization

- Scalability: Dedicated resources adapted to heavy workloads

- Enhanced Security: Real-time WAF protection against web threats

If you are interested in the BunkerWeb Cloud offering, don't hesitate to contact us so we can discuss your needs.

Architecture Overview

Simple Architecture - Single Service

graph LR

A[Client] -->|HTTPS| B[example.com]

B -->|DNS Resolution| C["Load Balancer<br/>54984654.bunkerweb.cloud"]

C -->|Traffic| D["BunkerWeb Cloud<br/>WAF + Reverse Proxy"]

D -->|HTTPS/HTTP| E["Server example.com<br/>Client Infrastructure"]

style C fill:#e1f5fe,color:#222

style D fill:#f3e5f5,color:#222

style E fill:#e8f5e8,color:#222

Complex Architecture - Multi-services

graph LR

A[Clients] -->|HTTPS| B["example.com<br/>other-example.com<br/>an-other-example.com"]

B -->|DNS Resolution| C["Load Balancer<br/>54984654.bunkerweb.cloud"]

C -->|Traffic| D["BunkerWeb Cloud<br/>WAF + Reverse Proxy<br/>SSL SNI Enabled"]

D -->|HTTPS with SNI| E["Client Gateway<br/>Reverse Proxy/LB"]

E -->|Internal routing| F[Service 1]

E -->|Internal routing| G[Service 2]

E -->|Internal routing| H[Service N]

style C fill:#e1f5fe,color:#222

style D fill:#f3e5f5,color:#222

style E fill:#fff3e0,color:#222

style F fill:#e8f5e8,color:#222

style G fill:#e8f5e8,color:#222

style H fill:#e8f5e8,color:#222

Initial Configuration

1. Management Interface Access

After subscribing to BunkerWeb Cloud, you will receive:

- BunkerWeb UI access URL: Interface for configuring your services

- Load Balancer endpoint: Unique URL in the format http://[ID].bunkerweb.cloud

- Grafana Access: Monitoring interface and metrics visualization

- Allocated Resources: 2 instances with 8GB RAM and 2 CPU each

2. First Connection

- Connect to the BunkerWeb Cloud interface

- Configure your services to protect

- Access Grafana to visualize your BunkerWeb logs and metrics

DNS Configuration

Traffic Redirection to BunkerWeb Cloud

For your domain traffic to be processed by BunkerWeb Cloud, you must configure your DNS records:

Required Configuration:

example.com. IN CNAME 54984654.bunkerweb.cloud.

www.example.com. IN CNAME 54984654.bunkerweb.cloud.

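Important: Replace 54984654 with the load balancer identifier provided during subscription. Standard DNS does not permit a CNAME record at the zone apex (example.com.); if your DNS provider rejects that record, use its ALIAS/ANAME or CNAME-flattening feature instead.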

Configuration Validation

Verify DNS resolution:

dig example.com

nslookup example.com

The result should point to your BunkerWeb Cloud endpoint.
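The same check can be scripted. The following is a minimal sketch, not an official tool: it assumes that your domain and the load balancer endpoint should resolve to the same IPv4 addresses, and the helper names `lookup_ipv4` and `resolves_to_same_addresses` are hypothetical.

```python
import socket


def lookup_ipv4(hostname):
    """Resolve a hostname to its IPv4 addresses (performs a live DNS query)."""
    _name, _aliases, addresses = socket.gethostbyname_ex(hostname)
    return addresses


def resolves_to_same_addresses(domain_addrs, endpoint_addrs):
    """Return True when every address of the domain is also served by the endpoint."""
    domain_set, endpoint_set = set(domain_addrs), set(endpoint_addrs)
    # An empty result means the domain did not resolve at all.
    return bool(domain_set) and domain_set <= endpoint_set
```

Calling `resolves_to_same_addresses(lookup_ipv4("example.com"), lookup_ipv4("54984654.bunkerweb.cloud"))` runs the live comparison; keeping the comparison separate from the lookup makes it easy to test without network access.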

Service Configuration

Single Service

For a simple service hosted on your infrastructure:

Configuration in BunkerWeb UI:

- Server Name: example.com

- Use Reverse Proxy: yes

- Reverse Proxy Host: 185.87.1.100:443 (your server IP)

You can find all configuration options in the Reverse Proxy Documentation

Multi-services with SNI

Why Enable SNI?

Server Name Indication (SNI) is essential when:

- Multiple domains point to the same backend infrastructure

- Your infrastructure hosts multiple services with distinct SSL certificates

- You use a reverse proxy/gateway on the client side

SNI Configuration

In BunkerWeb UI, for each service:

# Service 1

SERVICE_NAME: example-com

SERVER_NAME: example.com

REVERSE_PROXY_HOST: https://gateway.internal.domain.com

REVERSE_PROXY_PORT: 443

REVERSE_PROXY_SSL_SNI: yes

REVERSE_PROXY_SSL_SNI_NAME: example.com

# Service 2

SERVICE_NAME: other-example-com

SERVER_NAME: other-example.com

REVERSE_PROXY_HOST: https://gateway.internal.domain.com

REVERSE_PROXY_PORT: 443

REVERSE_PROXY_SSL_SNI: yes

REVERSE_PROXY_SSL_SNI_NAME: other-example.com

You can find all configuration options in the Reverse Proxy Documentation

SNI Technical Details

SNI allows BunkerWeb Cloud to:

- Identify the target service during TLS connection

- Transmit the correct domain name to the backend

- Allow the client gateway to select the right certificate

- Route correctly to the appropriate service
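To illustrate the certificate selection step, here is a simplified model of what a gateway does with the SNI name it receives. It is a sketch only: real gateways implement this inside their TLS stack, and the `CERTIFICATES` table, the `default.crt` fallback, and the `select_certificate` helper are hypothetical.

```python
# Hypothetical mapping of server names to certificate files on the gateway.
CERTIFICATES = {
    "example.com": "/path/to/example.com.crt",
    "api.example.com": "/path/to/api.example.com.crt",
}


def select_certificate(sni_name, certificates, default="default.crt"):
    """Pick the certificate for the requested server name.

    When the client sends no SNI (sni_name is None) or an unknown name,
    the gateway can only fall back to its default certificate.
    """
    if sni_name is None:
        return default
    # Server names are case-insensitive and may carry a trailing dot.
    return certificates.get(sni_name.lower().rstrip("."), default)
```

With SNI disabled, BunkerWeb Cloud sends no server name, so the gateway answers with the default certificate, which typically does not match the requested domain.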

Without SNI enabled:

graph LR

A[Client] --> B[BunkerWeb]

B --> C["Gateway (default certificate)"]

C --> D[SSL Error]

style B fill:#f3e5f5,color:#222

style C fill:#fff3e0,color:#222

style D fill:#ff4d4d,color:#fff,stroke:#b30000,stroke-width:2px

With SNI enabled:

graph LR

A[Client] --> B[BunkerWeb]

B --> C["Gateway (example.com specific certificate)"]

C --> D[Correct Service]

style B fill:#f3e5f5,color:#222

style C fill:#e8f5e8,color:#222

style D fill:#e8f5e8,color:#222

SSL/TLS and SNI Management

SSL Certificates

BunkerWeb Cloud Side

BunkerWeb Cloud automatically manages:

- Let's Encrypt certificates for your domains

- Automatic renewal

- Optimized TLS configuration

Client Infrastructure Side

Important Recommendations:

- Use HTTPS for communication between BunkerWeb and your services

- Manage your own certificates on your infrastructure

- Properly configure SNI on your gateway/reverse proxy

Detailed SNI Configuration

Use Case: Infrastructure with Gateway

If your architecture looks like:

graph LR

A[BunkerWeb Cloud] --> B[Client Gateway]

B --> C[Service 1]

B --> D[Service 2]

B --> E[Service 3]

style A fill:#f3e5f5,color:#222

style B fill:#fff3e0,color:#222

style C fill:#e8f5e8,color:#222

style D fill:#e8f5e8,color:#222

style E fill:#e8f5e8,color:#222

Required configuration on BunkerWeb side:

# Configuration for example.com

REVERSE_PROXY_SSL_SNI: yes

REVERSE_PROXY_SSL_SNI_NAME: example.com

REVERSE_PROXY_SSL_VERIFY: no # If self-signed certificate on client side

REVERSE_PROXY_HEADERS: Host $host

# Configuration for api.example.com

REVERSE_PROXY_SSL_SNI: yes

REVERSE_PROXY_SSL_SNI_NAME: api.example.com

REVERSE_PROXY_SSL_VERIFY: no

REVERSE_PROXY_HEADERS: Host $host

Client Gateway Configuration

Overview

When your architecture uses a gateway/reverse proxy on the client side to route traffic to multiple services, specific configuration is needed to support SNI and ensure secure communication with BunkerWeb Cloud.

Configurations by Technology

Nginx

Nginx Configuration

# Configuration to support SNI with multiple services

server {

listen 443 ssl http2;

server_name example.com;

ssl_certificate /path/to/example.com.crt;

ssl_certificate_key /path/to/example.com.key;

ssl_protocols TLSv1.2 TLSv1.3;

ssl_ciphers ECDHE-ECDSA-AES128-GCM-SHA256:ECDHE-RSA-AES128-GCM-SHA256;

ssl_prefer_server_ciphers off;

# Security headers

add_header X-Frame-Options DENY;

add_header X-Content-Type-Options nosniff;

add_header X-XSS-Protection "1; mode=block";

location / {

proxy_pass http://service1:8080;

proxy_set_header Host $host;

proxy_set_header X-Real-IP $remote_addr;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

proxy_set_header X-Forwarded-Proto $scheme;

proxy_set_header X-Forwarded-Host $host;

proxy_set_header X-Forwarded-Server $host;

# Timeouts

proxy_connect_timeout 60s;

proxy_send_timeout 60s;

proxy_read_timeout 60s;

}

}

server {

listen 443 ssl http2;

server_name api.example.com;

ssl_certificate /path/to/api.example.com.crt;

ssl_certificate_key /path/to/api.example.com.key;

ssl_protocols TLSv1.2 TLSv1.3;

ssl_ciphers ECDHE-ECDSA-AES128-GCM-SHA256:ECDHE-RSA-AES128-GCM-SHA256;

ssl_prefer_server_ciphers off;

location / {

proxy_pass http://api-service:3000;

proxy_set_header Host $host;

proxy_set_header X-Real-IP $remote_addr;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

proxy_set_header X-Forwarded-Proto $scheme;

# API-specific configuration

proxy_buffering off;

proxy_request_buffering off;

}

}

Traefik

Traefik Configuration

**With Docker Compose:**

services:
  traefik:
    image: traefik:v3.0
    command:
      - --api.dashboard=true
      - --providers.docker=true
      - --providers.file.filename=/etc/traefik/dynamic.yml
      - --entrypoints.websecure.address=:443
      - --certificatesresolvers.myresolver.acme.tlschallenge=true
      - --certificatesresolvers.myresolver.acme.email=admin@example.com
      - --certificatesresolvers.myresolver.acme.storage=/letsencrypt/acme.json
    ports:
      - "443:443"
      - "8080:8080"
    volumes:
      - /var/run/docker.sock:/var/run/docker.sock:ro
      - ./letsencrypt:/letsencrypt
      - ./dynamic.yml:/etc/traefik/dynamic.yml:ro
    labels:
      - "traefik.enable=true"
      - "traefik.http.routers.dashboard.rule=Host(`traefik.example.com`)"
      - "traefik.http.routers.dashboard.tls.certresolver=myresolver"

  service1:
    image: your-app:latest
    labels:
      - "traefik.enable=true"
      - "traefik.http.routers.service1.rule=Host(`example.com`)"
      - "traefik.http.routers.service1.entrypoints=websecure"
      - "traefik.http.routers.service1.tls.certresolver=myresolver"
      - "traefik.http.services.service1.loadbalancer.server.port=8080"
      # Middlewares defined in dynamic.yml need the @file provider suffix
      - "traefik.http.routers.service1.middlewares=security-headers@file,compress@file"

  api-service:
    image: your-api:latest
    labels:
      - "traefik.enable=true"
      - "traefik.http.routers.api.rule=Host(`api.example.com`)"
      - "traefik.http.routers.api.entrypoints=websecure"
      - "traefik.http.routers.api.tls.certresolver=myresolver"
      - "traefik.http.services.api.loadbalancer.server.port=3000"
      - "traefik.http.routers.api.middlewares=security-headers@file,rate-limit@file,compress@file"

**Dynamic configuration (dynamic.yml):**

http:
  middlewares:
    security-headers:
      headers:
        frameDeny: true
        contentTypeNosniff: true
        browserXssFilter: true
        forceSTSHeader: true
        stsIncludeSubdomains: true
        stsPreload: true
        stsSeconds: 31536000
        customRequestHeaders:
          X-Forwarded-Proto: "https"
    rate-limit:
      rateLimit:
        burst: 100
        average: 50
    compress:
      compress: {}

  routers:
    service1:
      rule: "Host(`example.com`)"
      service: "service1"
      tls:
        certResolver: "myresolver"
      middlewares:
        - "security-headers"
        - "compress"
    api:
      rule: "Host(`api.example.com`)"
      service: "api-service"
      tls:
        certResolver: "myresolver"
      middlewares:
        - "security-headers"
        - "rate-limit"
        - "compress"

  services:
    service1:
      loadBalancer:
        servers:
          - url: "http://service1:8080"
        healthCheck:
          path: "/health"
          interval: "30s"
    api-service:
      loadBalancer:
        servers:
          - url: "http://api-service:3000"
        healthCheck:
          path: "/api/health"
          interval: "30s"

Apache

Apache Configuration

# Apache configuration with SNI

<VirtualHost *:443>

ServerName example.com

DocumentRoot /var/www/html

# SSL configuration

SSLEngine on

SSLProtocol all -SSLv3 -TLSv1 -TLSv1.1

SSLCipherSuite ECDHE-ECDSA-AES128-GCM-SHA256:ECDHE-RSA-AES128-GCM-SHA256

SSLHonorCipherOrder off

SSLCertificateFile /path/to/example.com.crt

SSLCertificateKeyFile /path/to/example.com.key

# Security headers

Header always set X-Frame-Options DENY

Header always set X-Content-Type-Options nosniff

Header always set X-XSS-Protection "1; mode=block"

Header always set Strict-Transport-Security "max-age=31536000; includeSubDomains"

# Reverse proxy configuration

ProxyPass / http://service1:8080/

ProxyPassReverse / http://service1:8080/

ProxyPreserveHost On

# Custom headers

RequestHeader set X-Forwarded-Proto "https"

<Proxy *>

Require all granted

</Proxy>

# Logs

ErrorLog ${APACHE_LOG_DIR}/example.com_error.log

CustomLog ${APACHE_LOG_DIR}/example.com_access.log combined

</VirtualHost>

<VirtualHost *:443>

ServerName api.example.com

SSLEngine on

SSLProtocol all -SSLv3 -TLSv1 -TLSv1.1

SSLCipherSuite ECDHE-ECDSA-AES128-GCM-SHA256:ECDHE-RSA-AES128-GCM-SHA256

SSLCertificateFile /path/to/api.example.com.crt

SSLCertificateKeyFile /path/to/api.example.com.key

ProxyPass / http://api-service:3000/

ProxyPassReverse / http://api-service:3000/

ProxyPreserveHost On

# API-specific configuration

ProxyTimeout 300

ProxyBadHeader Ignore

ErrorLog ${APACHE_LOG_DIR}/api.example.com_error.log

CustomLog ${APACHE_LOG_DIR}/api.example.com_access.log combined

</VirtualHost>

# Required modules configuration

LoadModule ssl_module modules/mod_ssl.so

LoadModule proxy_module modules/mod_proxy.so

LoadModule proxy_http_module modules/mod_proxy_http.so

LoadModule headers_module modules/mod_headers.so

HAProxy

HAProxy Configuration

global

maxconn 4096

log stdout local0

chroot /var/lib/haproxy

stats socket /run/haproxy/admin.sock mode 660 level admin

stats timeout 30s

user haproxy

group haproxy

daemon

# SSL configuration

ssl-default-bind-ciphers ECDHE-ECDSA-AES128-GCM-SHA256:ECDHE-RSA-AES128-GCM-SHA256

ssl-default-bind-options ssl-min-ver TLSv1.2 no-tls-tickets

defaults

mode http

timeout connect 5000ms

timeout client 50000ms

timeout server 50000ms

option httplog

option dontlognull

option redispatch

retries 3

maxconn 2000

frontend https_frontend

bind *:443 ssl crt /etc/ssl/certs/example.com.pem crt /etc/ssl/certs/api.example.com.pem

# Security headers

http-response set-header X-Frame-Options DENY

http-response set-header X-Content-Type-Options nosniff

http-response set-header X-XSS-Protection "1; mode=block"

http-response set-header Strict-Transport-Security "max-age=31536000; includeSubDomains"

# Host-based routing (the bind line above already selects the certificate by SNI)

acl is_example hdr(host) -i example.com

acl is_api hdr(host) -i api.example.com

use_backend service1_backend if is_example

use_backend api_backend if is_api

default_backend service1_backend

backend service1_backend

balance roundrobin

option httpchk GET /health

http-check expect status 200

server service1-1 service1:8080 check

server service1-2 service1-backup:8080 check backup

backend api_backend

balance roundrobin

option httpchk GET /api/health

http-check expect status 200

server api-1 api-service:3000 check

server api-2 api-service-backup:3000 check backup

# Statistics interface (optional)

listen stats

bind *:8404

stats enable

stats uri /stats

stats refresh 30s

stats admin if TRUE

SSL Configuration Validation

Test SSL configuration:

# SSL connectivity test

openssl s_client -connect your-domain.com:443 -servername your-domain.com

# Headers verification

curl -I https://your-domain.com

# SNI test (connects to the load balancer while sending example.com as SNI and Host)

curl --connect-to example.com:443:54984654.bunkerweb.cloud:443 https://example.com

Gateway Best Practices

- Health Checks: Configure health checks for your services

- Load Balancing: Use multiple instances for high availability

- Monitoring: Monitor your gateway metrics

- Security Headers: Add appropriate security headers

- Timeouts: Configure appropriate timeouts to avoid blocking

BunkerWeb Cloud IP Whitelist

Why Configure a Whitelist?

To further secure your infrastructure, it is recommended to configure a whitelist of BunkerWeb Cloud IP addresses on the client infrastructure side. This ensures that only traffic from BunkerWeb Cloud can reach your backend services.

We recommend whitelisting at the firewall level (for example, with iptables).

BunkerWeb Cloud IP Addresses to Whitelist

The up-to-date list of IP addresses to allow is available at https://repo.bunkerweb.io/cloud/ips. The addresses used in this guide are:

# BunkerWeb Cloud IP addresses

4.233.128.18

20.19.161.132
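Whatever technology enforces it, the whitelist decision is the same membership test. The sketch below shows that logic with Python's standard `ipaddress` module; the `is_allowed` helper is hypothetical, and the hard-coded tuple is an assumption that should be replaced by the current published list.

```python
import ipaddress

# Addresses listed above; refresh them from the published list before relying on them.
BUNKERWEB_CLOUD_IPS = ("4.233.128.18", "20.19.161.132")


def is_allowed(source_ip, allowed=BUNKERWEB_CLOUD_IPS):
    """Return True when source_ip belongs to one of the allowed addresses or CIDR ranges."""
    try:
        address = ipaddress.ip_address(source_ip)
    except ValueError:
        # Anything that is not a valid IP address is rejected.
        return False
    return any(address in ipaddress.ip_network(entry) for entry in allowed)
```

Entries may be single addresses or CIDR ranges, since `ipaddress.ip_network` accepts both forms.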

Whitelist Configuration by Technology

Nginx

Nginx Configuration

# In your server configuration

server {

listen 443 ssl;

server_name example.com;

# BunkerWeb Cloud IP whitelist

allow 4.233.128.18;

allow 20.19.161.132;

deny all;

ssl_certificate /path/to/example.com.crt;

ssl_certificate_key /path/to/example.com.key;

location / {

proxy_pass http://service1:8080;

proxy_set_header Host $host;

proxy_set_header X-Real-IP $remote_addr;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

}

}

# Configuration with geo module for more flexibility

geo $bunkerweb_ip {

default 0;

4.233.128.18 1;

20.19.161.132 1;

}

server {

listen 443 ssl;

server_name example.com;

if ($bunkerweb_ip = 0) {

return 403;

}

# ... rest of configuration

}

Traefik

Traefik Configuration

# Configuration in dynamic.yml
http:
  middlewares:
    bunkerweb-whitelist:
      # Named ipWhiteList in Traefik v2; Traefik v3 uses ipAllowList
      ipAllowList:
        # Matched against the connecting peer, which is BunkerWeb Cloud
        sourceRange:
          - "4.233.128.18/32"
          - "20.19.161.132/32"

  routers:
    example-router:
      rule: "Host(`example.com`)"
      service: "example-service"
      middlewares:
        - "bunkerweb-whitelist"
        - "security-headers"
      tls:
        certResolver: "myresolver"
    api-router:
      rule: "Host(`api.example.com`)"
      service: "api-service"
      middlewares:
        - "bunkerweb-whitelist"
        - "security-headers"
      tls:
        certResolver: "myresolver"

# Equivalent configuration with Docker Compose labels
services:
  service1:
    image: your-app:latest
    labels:
      - "traefik.enable=true"
      - "traefik.http.routers.service1.rule=Host(`example.com`)"
      - "traefik.http.routers.service1.middlewares=bunkerweb-whitelist"
      - "traefik.http.middlewares.bunkerweb-whitelist.ipallowlist.sourcerange=4.233.128.18/32,20.19.161.132/32"

Apache

Apache Configuration

<VirtualHost *:443>

ServerName example.com

# BunkerWeb Cloud IP whitelist (RequireAny: a request passes when it comes from any listed address)

<RequireAny>

Require ip 4.233.128.18

Require ip 20.19.161.132

</RequireAny>

SSLEngine on

SSLCertificateFile /path/to/example.com.crt

SSLCertificateKeyFile /path/to/example.com.key

ProxyPass / http://service1:8080/

ProxyPassReverse / http://service1:8080/

ProxyPreserveHost On

# Configuration for denied access logs

LogFormat "%h %l %u %t \"%r\" %>s %O \"%{Referer}i\" \"%{User-Agent}i\"" combined

CustomLog logs/access.log combined

ErrorLog logs/error.log

</VirtualHost>

# Alternative configuration with mod_authz_core

<VirtualHost *:443>

ServerName api.example.com

<Directory />

<RequireAny>

Require ip 4.233.128.18

Require ip 20.19.161.132

</RequireAny>

</Directory>

# ... rest of configuration

</VirtualHost>

HAProxy

HAProxy Configuration

# Configuration in haproxy.cfg

frontend bunkerweb_frontend

bind *:443 ssl crt /path/to/certificates/

# ACL for BunkerWeb Cloud whitelist

acl bunkerweb_ips src 4.233.128.18/32 20.19.161.132/32

# Block everything except BunkerWeb Cloud

http-request deny unless bunkerweb_ips

# Security headers

http-response set-header X-Frame-Options DENY

http-response set-header X-Content-Type-Options nosniff

# Routing

acl is_example hdr(host) -i example.com

acl is_api hdr(host) -i api.example.com

use_backend app_servers if is_example

use_backend api_servers if is_api

default_backend app_servers

backend app_servers

balance roundrobin

server app1 service1:8080 check

server app2 service2:8080 check

backend api_servers

balance roundrobin

server api1 api-service:3000 check

server api2 api-service-backup:3000 check

System Firewall (iptables)

iptables Configuration

#!/bin/bash

# iptables configuration script for BunkerWeb Cloud whitelist

# Clear existing rules

iptables -F

iptables -X

# Default policies

iptables -P INPUT DROP

iptables -P FORWARD DROP

iptables -P OUTPUT ACCEPT

# Allow loopback

iptables -A INPUT -i lo -j ACCEPT

# Allow established connections

iptables -A INPUT -m state --state ESTABLISHED,RELATED -j ACCEPT

# Whitelist BunkerWeb Cloud IPs for HTTPS

iptables -A INPUT -p tcp --dport 443 -s 4.233.128.18 -j ACCEPT

iptables -A INPUT -p tcp --dport 443 -s 20.19.161.132 -j ACCEPT

# Allow HTTP for Let's Encrypt (optional)

iptables -A INPUT -p tcp --dport 80 -j ACCEPT

# Allow SSH (adapt to your needs)

iptables -A INPUT -p tcp --dport 22 -j ACCEPT

# Logs for debugging

iptables -A INPUT -j LOG --log-prefix "DROPPED: "

# Save rules

iptables-save > /etc/iptables/rules.v4

echo "iptables configuration applied successfully"

Whitelist Best Practices

- Monitor Rejections: Monitor blocked access attempts

- Regular Updates: Keep the IP list up to date

- Regular Testing: Validate that the whitelist works correctly

- Documentation: Document IP changes

- Alerting: Configure alerts for BunkerWeb IP changes

- Backup: Keep a backup configuration in case of issues

REAL_IP Configuration and Client Address Recovery

Why Configure REAL_IP?

When using BunkerWeb Cloud as a reverse proxy, the IP addresses that your backend applications see are those of BunkerWeb Cloud, not those of the real clients. To retrieve the real client IP addresses, specific configuration is required.

BunkerWeb Cloud Side Configuration

In the BunkerWeb UI, configure Real IP:

USE_REAL_IP: yes # Default is no

REAL_IP_FROM: 192.168.0.0/16 172.16.0.0/12 10.0.0.0/8 # Default

REAL_IP_HEADER: X-Forwarded-For # Default

REAL_IP_RECURSIVE: yes # Default

# Example if also using Cloudflare Proxy in front of BunkerWeb

REAL_IP_FROM_URLS: https://www.cloudflare.com/ips-v4/ https://www.cloudflare.com/ips-v6/

You can find all configuration options in the Real IP documentation.
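To make the effect of REAL_IP_FROM and REAL_IP_RECURSIVE more concrete, the sketch below models how a recursive lookup walks X-Forwarded-For from right to left and stops at the first address that is not a trusted proxy. It is an illustration under simplifying assumptions, not BunkerWeb's implementation; `real_client_ip` and `_parse` are hypothetical helpers.

```python
import ipaddress


def _parse(ip_text):
    """Return an ip_address object, or None when the text is not a valid IP."""
    try:
        return ipaddress.ip_address(ip_text.strip())
    except ValueError:
        return None


def real_client_ip(remote_addr, x_forwarded_for, trusted_networks):
    """Resolve the client IP from X-Forwarded-For, trusting only known proxies."""
    networks = [ipaddress.ip_network(net) for net in trusted_networks]

    def is_trusted(address):
        return any(address in net for net in networks)

    peer = _parse(remote_addr)
    # Headers sent by an untrusted peer are ignored entirely.
    if peer is None or not is_trusted(peer):
        return remote_addr

    candidate = remote_addr
    # Walk the header from the closest hop to the farthest one.
    for hop in reversed(x_forwarded_for.split(",")):
        address = _parse(hop)
        if address is None:
            break  # Stop at malformed entries and keep the last valid candidate.
        candidate = str(address)
        if not is_trusted(address):
            break  # First untrusted address is taken as the real client.
    return candidate
```

For example, with trusted networks `4.233.128.18/32` and `10.0.0.0/8`, a request from `4.233.128.18` carrying `X-Forwarded-For: 203.0.113.1, 10.0.0.5` resolves to `203.0.113.1`, while the same header sent by an untrusted peer is ignored.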

Client Infrastructure Side Configuration

Nginx

Nginx Configuration for REAL_IP

# Configure trusted IP addresses (BunkerWeb Cloud)

set_real_ip_from 4.233.128.18/32;

set_real_ip_from 20.19.161.132/32;

# Header to use for retrieving real IP

real_ip_header X-Real-IP;

# Alternative with X-Forwarded-For

# real_ip_header X-Forwarded-For;

server {

listen 443 ssl http2;

server_name example.com;

# SSL configuration

ssl_certificate /path/to/example.com.crt;

ssl_certificate_key /path/to/example.com.key;

location / {

proxy_pass http://service1:8080;

# Forward real IP headers to backend

proxy_set_header Host $host;

proxy_set_header X-Real-IP $remote_addr;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

proxy_set_header X-Forwarded-Proto $scheme;

# Log with real client IP

access_log /var/log/nginx/access.log combined;

}

}

# Custom log format with real IP

log_format real_ip '$remote_addr - $remote_user [$time_local] '

'"$request" $status $body_bytes_sent '

'"$http_referer" "$http_user_agent" '

'real_ip="$realip_remote_addr"';

Apache

Apache Configuration for REAL_IP

# Load mod_remoteip module

LoadModule remoteip_module modules/mod_remoteip.so

<VirtualHost *:443>

ServerName example.com

# SSL configuration

SSLEngine on

SSLCertificateFile /path/to/example.com.crt

SSLCertificateKeyFile /path/to/example.com.key

# Configure trusted IP addresses

RemoteIPHeader X-Real-IP

RemoteIPTrustedProxy 4.233.128.18/32

RemoteIPTrustedProxy 20.19.161.132/32

# Alternative with X-Forwarded-For

# RemoteIPHeader X-Forwarded-For

# Reverse proxy configuration

ProxyPass / http://service1:8080/

ProxyPassReverse / http://service1:8080/

ProxyPreserveHost On

# Forward IP headers: mod_proxy adds X-Forwarded-For, X-Forwarded-Host and

# X-Forwarded-Server to proxied requests by default (ProxyAddHeaders On)

# Logs with real IP

LogFormat "%a %l %u %t \"%r\" %>s %O \"%{Referer}i\" \"%{User-Agent}i\"" combined_real_ip

CustomLog logs/access.log combined_real_ip

ErrorLog logs/error.log

</VirtualHost>

HAProxy

HAProxy Configuration for REAL_IP

global

maxconn 4096

log stdout local0

defaults

mode http

option httplog

option dontlognull

option forwardfor

# Log format with real IP

log-format "%ci:%cp [%t] %ft %b/%s %Tq/%Tw/%Tc/%Tr/%Ta %ST %B %CC %CS %tsc %ac/%fc/%bc/%sc/%rc %sq/%bq %hr %hs %{+Q}r"

frontend https_frontend

bind *:443 ssl crt /etc/ssl/certs/

# BunkerWeb Cloud IP whitelist

acl bunkerweb_ips src 4.233.128.18/32 20.19.161.132/32

http-request deny unless bunkerweb_ips

# Capture real IP from headers

capture request header X-Real-IP len 15

capture request header X-Forwarded-For len 50

# Routing

acl is_example hdr(host) -i example.com

use_backend app_servers if is_example

default_backend app_servers

backend app_servers

balance roundrobin

# Add/preserve real IP headers

http-request set-header X-Original-Forwarded-For %[req.hdr(X-Forwarded-For)]

http-request set-header X-Client-IP %[req.hdr(X-Real-IP)]

server app1 service1:8080 check

server app2 service2:8080 check backup

Traefik

Traefik Configuration for REAL_IP

# Configuration in dynamic.yml
http:
  middlewares:
    real-ip:
      # Named ipWhiteList in Traefik v2; Traefik v3 uses ipAllowList
      ipAllowList:
        sourceRange:
          - "4.233.128.18/32"
          - "20.19.161.132/32"
        ipStrategy:
          depth: 2 # Number of trusted proxies
          excludedIPs:
            - "127.0.0.1/32"

  routers:
    example-router:
      rule: "Host(`example.com`)"
      service: "example-service"
      middlewares:
        - "real-ip"
      tls:
        certResolver: "myresolver"

  services:
    example-service:
      loadBalancer:
        servers:
          - url: "http://service1:8080"
        passHostHeader: true

# Static configuration (traefik.yml): entry points and access logs
entryPoints:
  websecure:
    address: ":443"
    forwardedHeaders:
      trustedIPs:
        - "4.233.128.18/32"
        - "20.19.161.132/32"
      insecure: false

accessLog:
  format: json
  fields:
    defaultMode: keep
    names:
      ClientUsername: drop
    headers:
      defaultMode: keep
      names:
        X-Real-IP: keep
        X-Forwarded-For: keep

Testing and Validation

Configuration Verification

# Test 1: Check received headers

curl -H "X-Real-IP: 203.0.113.1" \

-H "X-Forwarded-For: 203.0.113.1, 192.168.1.100" \

https://example.com/test-ip

# Test 2: Analyze logs

tail -f /var/log/nginx/access.log | grep "203.0.113.1"

# Test 3: Test from different sources

curl -v https://example.com/whatismyip

REAL_IP Best Practices

- Security: Only trust IP headers from known sources (BunkerWeb Cloud)

- Validation: Always validate IP addresses received in headers

- Logging: Log both proxy IP and real IP for debugging

- Fallback: Always have a default value if headers are missing

- Testing: Regularly test that IP detection works correctly

- Monitoring: Monitor IP patterns to detect anomalies

REAL_IP Troubleshooting

Common Issues

- IP always shows BunkerWeb's: Check trusted proxy configuration

- Missing headers: Check BunkerWeb Cloud side configuration

- Invalid IPs: Implement strict IP validation

- Incorrect logs: Check log format and real_ip module configuration

Diagnostic Commands

Test IP detection

curl -H "X-Real-IP: 1.2.3.4" https://your-domain.com/debug-headers

Monitoring and Observability

Grafana Access

Your managed Grafana instance gives you access to:

Available Metrics

- Traffic Overview

  - Requests per second

  - HTTP status codes

  - Request geolocation

- Security

  - Blocked attack attempts

  - Detected threat types

  - Triggered WAF rules

- Performance Metrics

  - Request latency

  - Backend response time

  - Resource utilization

Available Logs

- Access Logs: All HTTP/HTTPS requests

- Security Logs: Security events and blocks

- Error Logs: Application and system errors

Alert Configuration

Configure Grafana alerts for:

- Abnormal traffic spikes

- Increase in 5xx errors

- DDoS attack detection

- Backend health failures

Best Practices

Security

- Use HTTPS for all backend communication

- Implement an IP whitelist if possible

- Configure appropriate timeouts

- Enable compression to optimize performance

Performance

- Optimize cache configuration

- Use HTTP/2 on client side

- Configure health checks for your backends

- Monitor metrics regularly

Troubleshooting

Common Issues

1. SSL/TLS Error

Symptom: SSL certificate errors

Solutions:

# Check SNI configuration

openssl s_client -connect backend.com:443 -servername example.com

# Check backend certificates

openssl x509 -in certificate.crt -text -noout

2. Backend Timeout

Symptom: 504 Gateway Timeout errors

Solutions:

- Increase REVERSE_PROXY_CONNECT_TIMEOUT and REVERSE_PROXY_SEND_TIMEOUT

- Check backend health

- Optimize application performance

3. Routing Issues

Symptom: Wrong service being served

Solutions:

- Check SERVER_NAME configuration

- Validate SNI configuration

- Check Host headers

Diagnostic Commands

# Connectivity test

curl -v https://your-domain.com

# Test with custom headers

curl -H "Host: example.com" -v https://54984654.bunkerweb.cloud

# DNS verification

dig +trace example.com

# SSL test

openssl s_client -connect example.com:443 -servername example.com

Technical Support

For any technical assistance:

- Check logs in Grafana

- Verify configuration in BunkerWeb UI

- Contact support with configuration details and error logs

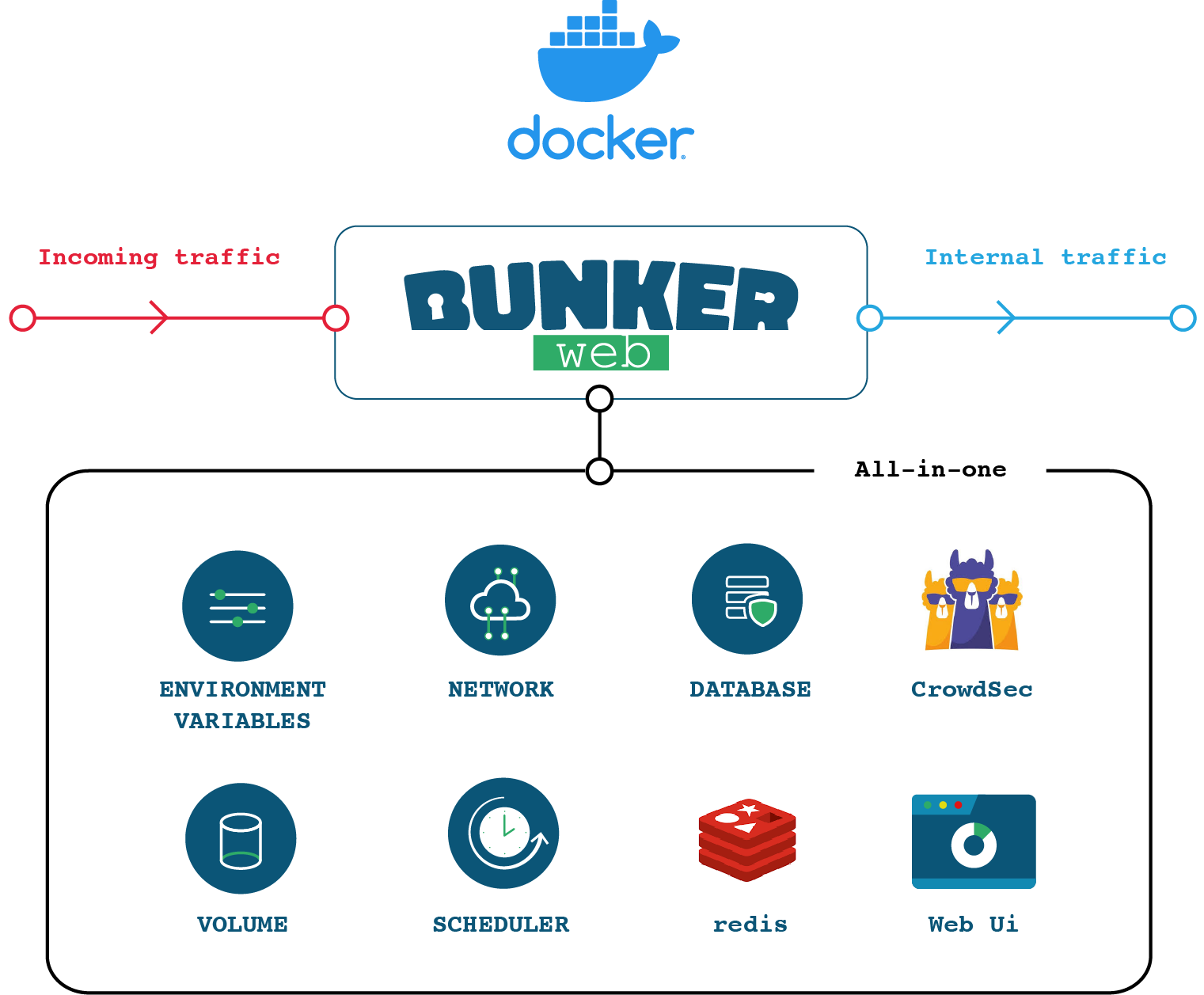

All-In-One (AIO) Image

Deployment

To deploy the all-in-one container, all you have to do is run the following command:

docker run -d \

--name bunkerweb-aio \

-v bw-storage:/data \

-p 80:8080/tcp \

-p 443:8443/tcp \

-p 443:8443/udp \

bunkerity/bunkerweb-all-in-one:1.6.11

By default, the container exposes:

- 8080/tcp for HTTP

- 8443/tcp for HTTPS

- 8443/udp for QUIC

- 7000/tcp for the web UI access without BunkerWeb in front (not recommended for production)

- 8888/tcp for the API when SERVICE_API=yes (internal use; prefer exposing it through BunkerWeb as a reverse proxy rather than publishing directly)

The All-In-One image comes with several built-in services, which can be controlled using environment variables:

- SERVICE_UI=yes (default) - Enables the web UI service

- SERVICE_SCHEDULER=yes (default) - Enables the Scheduler service

- SERVICE_API=no (default) - Disables the API service (FastAPI control plane)

- AUTOCONF_MODE=no (default) - Disables the autoconf service

- USE_REDIS=yes (default) - Enables the built-in Redis instance

- USE_CROWDSEC=no (default) - CrowdSec integration is disabled by default

- HIDE_SERVICE_LOGS= (optional) - Comma-separated list of services to silence in container logs. Accepted values: api, autoconf, bunkerweb, crowdsec, redis, scheduler, ui, nginx.access, nginx.error, modsec.

- Logging: The all-in-one image streams every service's stdout and stderr to the container output. Use docker logs bunkerweb-aio (or your preferred container logging driver) to view and rotate logs; the image does not write on-disk log files for its Python services.

A named volume (or bind mount) is required to persist the SQLite database, cache, and backups stored under /data inside the container:

services:
  bunkerweb-aio:
    image: bunkerity/bunkerweb-all-in-one:1.6.11
    container_name: bunkerweb-aio
    ports:
      - "80:8080/tcp"
      - "443:8443/tcp"
      - "443:8443/udp"
    volumes:
      - bw-storage:/data
...
volumes:
  bw-storage:

Using a local folder for persistent data

The All-In-One container runs services as an unprivileged user with UID 101 and GID 101. This improves security: even if a component is compromised, it does not gain root (UID/GID 0) on the host.

If you bind mount a local folder, ensure the directory permissions allow that unprivileged user to write data:

mkdir bw-data && \

chown root:101 bw-data && \

chmod 770 bw-data

Or, if the folder already exists:

chown -R root:101 bw-data && \

chmod -R 770 bw-data

When using Docker in rootless mode or Podman, container UIDs/GIDs are remapped. Check your subuid/subgid ranges first:

grep ^$(whoami): /etc/subuid && \

grep ^$(whoami): /etc/subgid

For example, if the range starts at 100000, container UID/GID 101 maps to 100100 on the host (100000 + 101 - 1, because container ID 1 maps to the first subordinate ID):

mkdir bw-data && \

sudo chgrp 100100 bw-data && \

chmod 770 bw-data

Or, if the folder already exists:

sudo chgrp -R 100100 bw-data && \

sudo chmod -R 770 bw-data
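The arithmetic behind the remapped ID can be written down as a small helper. This is a sketch under the usual rootless mapping assumptions (container ID 0 maps to the user running the container, and IDs from 1 upward map onto the subordinate range); the `host_id` function and the `owner_id=1000` default are hypothetical.

```python
def host_id(container_id, subid_start, owner_id=1000):
    """Map a container UID/GID to the host ID used in rootless mode.

    container_id: UID or GID inside the container (101 for BunkerWeb)
    subid_start:  first ID of the range found in /etc/subuid or /etc/subgid
    owner_id:     host ID of the user running the container (assumed 1000 here)
    """
    if container_id == 0:
        # Container root is the unprivileged host user itself.
        return owner_id
    # Container ID 1 is the first subordinate ID, so subtract one.
    return subid_start + container_id - 1
```

With a range starting at 100000, `host_id(101, 100000)` gives 100100, the value used in the chgrp commands above.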

API Integration

The All-In-One image embeds the BunkerWeb API. It is disabled by default and can be enabled by setting SERVICE_API=yes.

Security

The API is a privileged control plane. Do not expose it directly to the Internet. Keep it on an internal network, restrict source IPs with API_WHITELIST_IPS, require authentication (API_TOKEN or API users + Biscuit), and preferably access it through BunkerWeb as a reverse proxy on an unguessable path.

Quick enable (standalone) — publishes the API port; for testing only:

docker run -d \

--name bunkerweb-aio \

-v bw-storage:/data \

-e SERVICE_API=yes \

-e API_WHITELIST_IPS="127.0.0.0/8" \

-e API_USERNAME=changeme \

-e API_PASSWORD=StrongP@ssw0rd \

-p 80:8080/tcp -p 443:8443/tcp -p 443:8443/udp \

-p 8888:8888/tcp \

bunkerity/bunkerweb-all-in-one:1.6.11

Recommended (behind BunkerWeb) — do not publish 8888; reverse‑proxy it instead:

services:
  bunkerweb-aio:
    image: bunkerity/bunkerweb-all-in-one:1.6.11
    container_name: bunkerweb-aio
    ports:
      - "80:8080/tcp"
      - "443:8443/tcp"
      - "443:8443/udp"
    environment:
      SERVER_NAME: "api.example.com"
      MULTISITE: "yes"
      DISABLE_DEFAULT_SERVER: "yes"
      api.example.com_USE_TEMPLATE: "bw-api"
      api.example.com_USE_REVERSE_PROXY: "yes"
      api.example.com_REVERSE_PROXY_URL: "/api-<unguessable>"
      api.example.com_REVERSE_PROXY_HOST: "http://127.0.0.1:8888" # Internal API endpoint

      # API settings
      SERVICE_API: "yes"
      # Set strong credentials and whitelist only trusted IPs/networks (more details below)
      API_USERNAME: "changeme"
      API_PASSWORD: "StrongP@ssw0rd"
      API_ROOT_PATH: "/api-<unguessable>" # Match REVERSE_PROXY_URL

      # We deactivate the UI - change to "yes" to enable it
      SERVICE_UI: "no"
    volumes:
      - bw-storage:/data
    networks:
      - bw-universe

volumes:
  bw-storage:

networks:
  bw-universe:
    name: bw-universe

Details about authentication, permissions (ACL), rate limiting, TLS, and configuration options are available in the API documentation.

Accessing the Setup wizard

By default, the setup wizard launches automatically the first time you run the AIO container. To access it, follow these steps:

- Start the AIO container as above, ensuring SERVICE_UI=yes (default).

- Access the UI via your main BunkerWeb endpoint, e.g. https://your-domain.

Follow the next steps in the Quickstart guide to set up the Web UI.

Redis Integration

The BunkerWeb All-In-One image includes Redis out-of-the-box for the persistence of bans and reports. Keep in mind:

- The embedded Redis service only starts when USE_REDIS=yes and REDIS_HOST is left at its default (127.0.0.1/localhost).

- It listens on the container loopback interface, so it is available to processes inside the container but not from other containers or the host.

- Override REDIS_HOST only when you have an external Redis/Valkey endpoint to connect to; doing so prevents the embedded instance from launching.

- To disable Redis entirely, set USE_REDIS=no.

- Config precedence (important): the embedded Redis is launched from /var/lib/bunkerweb/redis-runtime.conf, built at boot by copying /etc/redis.conf and appending env-driven defaults only for directives the conf file is silent about. A mounted custom /etc/redis.conf therefore always wins; the env vars below only fill the gaps.

- Memory tuning: out-of-the-box defaults follow the Redis Best Practices: maxmemory 256mb and maxmemory-policy volatile-lru. Override via REDIS_MAXMEMORY and REDIS_MAXMEMORY_POLICY when the conf does not pin them.

- Persistence overrides: REDIS_APPENDONLY=yes|no toggles AOF (default yes); RDB snapshots are configured with REDIS_SAVE plus optional REDIS_SAVE_0, REDIS_SAVE_1, … each providing one save <seconds> <changes> pair (e.g. REDIS_SAVE_0="900 1", REDIS_SAVE_1="300 10"). Setting any of these env vars replaces the built-in 900 1 / 300 10 / 60 10000 default set; an empty value emits save "", disabling RDB. Ignored when the conf already declares save itself.

- Authentication: when REDIS_PASSWORD is set and the conf does not already define requirepass, the embedded Redis is launched with requirepass so the BunkerWeb client and server stay in sync. The embedded server only supports the default user; set REDIS_USERNAME only when pointing at an external Redis with ACLs.

- Redis logs appear with the [REDIS] prefix in Docker logs and in /var/log/bunkerweb/redis.log.
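The "conf file wins, env vars fill the gaps" rule can be illustrated with a short Python sketch. It is not the container's actual entrypoint logic: `build_runtime_conf` and `declared_directives` are hypothetical names, the `save` / `REDIS_SAVE_*` handling is left out for brevity, and only the defaults quoted in the list above are used.

```python
# env var -> (redis directive, built-in default or None when there is none)
ENV_TO_DIRECTIVE = {
    "REDIS_MAXMEMORY": ("maxmemory", "256mb"),
    "REDIS_MAXMEMORY_POLICY": ("maxmemory-policy", "volatile-lru"),
    "REDIS_APPENDONLY": ("appendonly", "yes"),
    "REDIS_PASSWORD": ("requirepass", None),
}


def declared_directives(conf_text: str) -> set[str]:
    """Return the directive names that the conf file sets explicitly."""
    names = set()
    for line in conf_text.splitlines():
        stripped = line.strip()
        if stripped and not stripped.startswith("#"):
            names.add(stripped.split()[0].lower())
    return names


def build_runtime_conf(conf_text: str, env: dict[str, str]) -> str:
    """Copy the conf and append env-driven values only for missing directives."""
    present = declared_directives(conf_text)
    extra = []
    for var, (directive, default) in ENV_TO_DIRECTIVE.items():
        if directive in present:
            continue  # the mounted conf already pins this directive, so it wins
        value = env.get(var, default)
        if value:
            extra.append(f"{directive} {value}")
    return "\n".join([conf_text.rstrip("\n"), *extra]) + "\n"


# A custom conf that pins maxmemory: the REDIS_MAXMEMORY env var is ignored,
# while the directives the conf is silent about receive their defaults.
print(build_runtime_conf("maxmemory 1gb\n", {"REDIS_MAXMEMORY": "512mb"}))
```

In this run the output keeps `maxmemory 1gb` from the conf and appends `maxmemory-policy volatile-lru` and `appendonly yes`.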

CrowdSec Integration

The BunkerWeb All-In-One Docker image comes with CrowdSec fully integrated—no extra containers or manual setup required. Follow the steps below to enable, configure, and extend CrowdSec in your deployment.

By default, CrowdSec is disabled. To turn it on, simply add the USE_CROWDSEC environment variable:

docker run -d \

--name bunkerweb-aio \

-v bw-storage:/data \

-e USE_CROWDSEC=yes \

-p 80:8080/tcp \

-p 443:8443/tcp \

-p 443:8443/udp \

bunkerity/bunkerweb-all-in-one:1.6.11

When USE_CROWDSEC=yes, the entrypoint will:

- Register and start the local CrowdSec agent (via cscli).

- Install or upgrade default collections & parsers.

- Configure the crowdsec-bunkerweb-bouncer/v1.6 bouncer.

Default Collections & Parsers

On first startup (or after upgrading), these assets are automatically installed and kept up to date:

| Type | Name | Purpose |
|---|---|---|
| Collection | bunkerity/bunkerweb | Defend Nginx servers against a broad array of HTTP-based attacks, from brute-force to injection attempts. |
| Collection | crowdsecurity/appsec-virtual-patching | Delivers a dynamically updated WAF-style rule set targeting known CVEs, automatically patched daily to shield web applications from newly discovered vulnerabilities. |
| Collection | crowdsecurity/appsec-generic-rules | Complements crowdsecurity/appsec-virtual-patching with heuristics for generic application-layer attack patterns (such as enumeration, path traversal, and automated probes), filling gaps where CVE-specific rules don’t yet exist. |
| Parser | crowdsecurity/geoip-enrich | Enriches events with GeoIP context |

How it works internally

The entrypoint script invokes:

cscli hub update

cscli install collection bunkerity/bunkerweb

cscli install collection crowdsecurity/appsec-virtual-patching

cscli install collection crowdsecurity/appsec-generic-rules

cscli install parser crowdsecurity/geoip-enrich

Collection missing in Docker?

If cscli collections list inside the container still does not show bunkerity/bunkerweb, run docker exec -it bunkerweb-aio cscli hub update and then restart the container (docker restart bunkerweb-aio) to refresh the local hub cache.

Adding Extra Collections

Need more coverage? Define CROWDSEC_EXTRA_COLLECTIONS with a space-separated list of Hub collections:

docker run -d \

--name bunkerweb-aio \

-v bw-storage:/data \

-e USE_CROWDSEC=yes \

-e CROWDSEC_EXTRA_COLLECTIONS="crowdsecurity/apache2 crowdsecurity/mysql" \

-p 80:8080/tcp \

-p 443:8443/tcp \

-p 443:8443/udp \

bunkerity/bunkerweb-all-in-one:1.6.11

How it works internally

The script loops through each name and installs or upgrades as needed—no manual steps required.

Disable Specific Parsers

If you want to keep the default setup but explicitly disable one or more parsers, provide a space-separated list via CROWDSEC_DISABLED_PARSERS:

docker run -d \

--name bunkerweb-aio \

-v bw-storage:/data \

-e USE_CROWDSEC=yes \

-e CROWDSEC_DISABLED_PARSERS="crowdsecurity/geoip-enrich foo/bar-parser" \

-p 80:8080/tcp \

-p 443:8443/tcp \

-p 443:8443/udp \

bunkerity/bunkerweb-all-in-one:1.6.11

Notes:

- The list is applied after required items are installed/updated; only the parsers you list are removed.

- Use hub slugs as shown by cscli parsers list (e.g., crowdsecurity/geoip-enrich).

AppSec Toggle

CrowdSec AppSec features—powered by the appsec-virtual-patching and appsec-generic-rules collections—are enabled by default.

To disable all AppSec (WAF/virtual-patching) functionality, set:

-e CROWDSEC_APPSEC_URL=""

This effectively turns off the AppSec endpoint so no rules are applied.

External CrowdSec API

If you operate a remote CrowdSec instance, point the container to your API:

docker run -d \

--name bunkerweb-aio \

-v bw-storage:/data \

-e USE_CROWDSEC=yes \

-e CROWDSEC_API="https://crowdsec.example.com:8000" \

-p 80:8080/tcp \

-p 443:8443/tcp \

-p 443:8443/udp \

bunkerity/bunkerweb-all-in-one:1.6.11

- Local registration is skipped when CROWDSEC_API is not 127.0.0.1 or localhost.

- AppSec is disabled by default when using an external API. To enable it, set CROWDSEC_APPSEC_URL to your desired endpoint.

- Bouncer registration still occurs against the remote API.

- To reuse an existing bouncer key, supply CROWDSEC_API_KEY with your pre-generated token.
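The rules in this list can be summarized in a small Python sketch. It is a reading of the behaviour described here, not the entrypoint's code: `is_local_crowdsec` and `startup_plan` are hypothetical helpers, and `ASSUMED_DEFAULT_API` is a placeholder default chosen for illustration only.

```python
from urllib.parse import urlparse

LOCAL_HOSTS = {"127.0.0.1", "localhost"}
ASSUMED_DEFAULT_API = "http://127.0.0.1:8000"  # assumption, not a documented default


def is_local_crowdsec(api_url: str) -> bool:
    """True when the CrowdSec API URL points at the container itself."""
    # urlparse only fills in hostname when a scheme is present, so add one if missing.
    parsed = urlparse(api_url if "://" in api_url else f"http://{api_url}")
    return (parsed.hostname or "") in LOCAL_HOSTS


def startup_plan(env: dict[str, str]) -> dict[str, bool]:
    """Summarize which startup steps apply for a given environment."""
    local = is_local_crowdsec(env.get("CROWDSEC_API", ASSUMED_DEFAULT_API))
    appsec_url = env.get("CROWDSEC_APPSEC_URL")
    # Unset: AppSec follows the local/external default. Set: empty string disables it.
    appsec = local if appsec_url is None else appsec_url != ""
    return {
        "register_local_agent": local,
        "appsec_enabled": appsec,
        "reuse_bouncer_key": bool(env.get("CROWDSEC_API_KEY")),
    }


print(startup_plan({"CROWDSEC_API": "https://crowdsec.example.com:8000"}))
```

With the external URL from the example above, the plan skips local registration and leaves AppSec off until CROWDSEC_APPSEC_URL is set.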

More options

For full coverage of all CrowdSec options (custom scenarios, logs, troubleshooting, and more), see the BunkerWeb CrowdSec plugin docs or visit the official CrowdSec website.

Docker

Using BunkerWeb as a Docker container offers a convenient and straightforward approach for testing and utilizing the solution, particularly if you are already familiar with Docker technology.

To facilitate your Docker deployment, we provide readily available prebuilt images on Docker Hub, supporting multiple architectures. These prebuilt images are optimized and prepared for use on the following architectures:

- x64 (64-bit)

- x86

- armv8 (ARM 64-bit)

- armv7 (ARM 32-bit)

Pulling a prebuilt image from Docker Hub lets you run BunkerWeb without building anything yourself. The same images can be used for testing, development, and production deployments:

docker pull bunkerity/bunkerweb:1.6.11

Docker images are also available on GitHub packages and can be downloaded using the ghcr.io repository address:

docker pull ghcr.io/bunkerity/bunkerweb:1.6.11

Key concepts for Docker integration include:

- Environment variables: Configure BunkerWeb easily using environment variables. These variables allow you to customize various aspects of BunkerWeb's behavior, such as network settings, security options, and other parameters.

- Scheduler container: Manage configuration and execute jobs using a dedicated container called the scheduler.

- Networks: Docker networks serve two purposes in a BunkerWeb deployment: exposing ports so that clients can reach BunkerWeb, and connecting BunkerWeb to the upstream web services it protects.

Database backend

Please note that our instructions assume you are using SQLite as the default database backend, as configured by the DATABASE_URI setting. However, other database backends are also supported. See the docker-compose files in the misc/integrations folder of the repository for more information.

Environment variables

Settings are passed to the Scheduler using Docker environment variables:

...
services:
  bw-scheduler:
    image: bunkerity/bunkerweb-scheduler:1.6.11
    environment:
      - MY_SETTING=value
      - ANOTHER_SETTING=another value
    volumes:
      - bw-storage:/data # This is used to persist the cache and other data like backups
...

Full list

For the complete list of environment variables, see the settings section of the documentation.

Skipping labelled containers

When a container should be ignored by autoconf, set DOCKER_IGNORE_LABELS on the controller. Provide a space- or comma-separated list of label keys (for example bunkerweb.SERVER_NAME) or just the suffix (SERVER_NAME). Any container or custom-config source carrying a matching label is skipped during discovery, and the label is ignored when translating settings.
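A minimal Python sketch of how such a list could be parsed and matched is shown below. It assumes that labels carry the `bunkerweb.` prefix and that matching is case-sensitive; `parse_ignore_labels` and `should_skip` are hypothetical helpers, not autoconf's implementation.

```python
import re

PREFIX = "bunkerweb."


def parse_ignore_labels(value: str) -> set[str]:
    """Split a space- or comma-separated list and normalise each entry to its suffix."""
    entries = [item for item in re.split(r"[\s,]+", value.strip()) if item]
    return {item[len(PREFIX):] if item.startswith(PREFIX) else item for item in entries}


def should_skip(container_labels: dict[str, str], ignored: set[str]) -> bool:
    """True when the container carries any prefixed label whose suffix is ignored."""
    for key in container_labels:
        if key.startswith(PREFIX) and key[len(PREFIX):] in ignored:
            return True
    return False


# Full key and bare suffix are treated the same way.
ignored = parse_ignore_labels("bunkerweb.SERVER_NAME, USE_ANTIBOT")
print(should_skip({"bunkerweb.SERVER_NAME": "www.example.com"}, ignored))
```

Normalising to the suffix up front means the matching step does not need to care which of the two accepted forms was used in the setting.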

Using Docker secrets

Instead of passing sensitive settings via environment variables, you can store them as Docker secrets. For each setting you want to secure, create a Docker secret with the name matching the setting key (in uppercase). BunkerWeb's entrypoint scripts automatically load secrets from /run/secrets and export them as environment variables.

Example:

# Create a Docker secret for ADMIN_PASSWORD

echo "S3cr3tP@ssw0rd" | docker secret create ADMIN_PASSWORD -

Mount the secrets when deploying:

services:
  bw-ui:
    secrets:
      - ADMIN_PASSWORD
...
secrets:
  ADMIN_PASSWORD:
    external: true

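This keeps sensitive values out of your Compose files and container definitions. The entrypoint still exports them as environment variables inside the container at startup, as described above.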

Scheduler

The scheduler runs in its own container, which is also available on Docker Hub:

docker pull bunkerity/bunkerweb-scheduler:1.6.11

BunkerWeb settings

Since version 1.6.0, the Scheduler container is where you define the settings for BunkerWeb. The Scheduler then pushes the configuration to the BunkerWeb container.

⚠ Important: All API-related settings (like API_HTTP_PORT, API_LISTEN_IP, API_SERVER_NAME, API_WHITELIST_IP, and API_TOKEN if you use it) must also be defined in the BunkerWeb container. The settings must be mirrored in both containers; otherwise, the BunkerWeb container will not accept API requests from the Scheduler.

x-bw-api-env: &bw-api-env
  # We use an anchor to avoid repeating the same settings for both containers
  API_HTTP_PORT: "5000" # Default value
  API_LISTEN_IP: "0.0.0.0" # Default value
  API_SERVER_NAME: "bwapi" # Default value
  API_WHITELIST_IP: "127.0.0.0/24 10.20.30.0/24" # Set this according to your network settings
  # Optional token; if set, Scheduler sends Authorization: Bearer <token>
  API_TOKEN: ""

services:
  bunkerweb:
    image: bunkerity/bunkerweb:1.6.11
    environment:
      # This will set the API settings for the BunkerWeb container
      <<: *bw-api-env
    restart: "unless-stopped"
    networks:
      - bw-universe

  bw-scheduler:
    image: bunkerity/bunkerweb-scheduler:1.6.11
    environment:
      # This will set the API settings for the Scheduler container
      <<: *bw-api-env
    volumes:
      - bw-storage:/data # This is used to persist the cache and other data like backups
    restart: "unless-stopped"
    networks:
      - bw-universe
...

A volume is needed to store the SQLite database and backups used by the scheduler:

...
services:
  bw-scheduler:
    image: bunkerity/bunkerweb-scheduler:1.6.11
    volumes:
      - bw-storage:/data
...
volumes:
  bw-storage:

Using a local folder for persistent data

The scheduler runs as an unprivileged user with UID 101 and GID 101 inside the container. This enhances security: in case a vulnerability is exploited, the attacker won't have full root (UID/GID 0) privileges.

However, if you use a local folder for persistent data, you must set the correct permissions so the unprivileged user can write data to it. For example:

mkdir bw-data && \

chown root:101 bw-data && \

chmod 770 bw-data

Alternatively, if the folder already exists:

chown -R root:101 bw-data && \

chmod -R 770 bw-data

If you are using Docker in rootless mode or Podman, UIDs and GIDs in the container will be mapped to different ones on the host. You will first need to check your initial subuid and subgid:

grep ^$(whoami): /etc/subuid && \

grep ^$(whoami): /etc/subgid

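For example, if the range starts at 100000, the mapped UID/GID will be 100100 (100000 + 100). The offset is 100 rather than 101 because container ID 0 maps to your own user, so container IDs from 1 upward begin at the start of the range: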

mkdir bw-data && \

sudo chgrp 100100 bw-data && \

chmod 770 bw-data

Or if the folder already exists:

sudo chgrp -R 100100 bw-data && \

sudo chmod -R 770 bw-data

Scheduler container settings

The scheduler is the control-plane worker that reads settings, renders configs, and pushes them to BunkerWeb instances. Settings are centralized here with defaults and accepted values.

Configuration sources and precedence

- Environment variables (including Docker/Compose environment:)

- Secrets in /run/secrets/<VAR> (auto-loaded by the entrypoint)

- Built-in defaults
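The layering of these sources can be sketched in Python. This assumes the list is ordered from highest to lowest precedence (environment variables first, built-in defaults last), which is an interpretation rather than something stated explicitly. `load_secrets` and `resolve_settings` are hypothetical helpers, and a temporary directory stands in for /run/secrets.

```python
import tempfile
from pathlib import Path


def load_secrets(secrets_dir: Path) -> dict[str, str]:
    """Read every regular file in the secrets directory as NAME -> value."""
    if not secrets_dir.is_dir():
        return {}
    return {
        path.name: path.read_text().strip()
        for path in secrets_dir.iterdir()
        if path.is_file()
    }


def resolve_settings(env: dict[str, str], secrets_dir: Path, defaults: dict[str, str]) -> dict[str, str]:
    """Merge sources so that env beats secrets, and secrets beat defaults (assumed order)."""
    merged = dict(defaults)
    merged.update(load_secrets(secrets_dir))
    merged.update(env)
    return merged


# Demo with a throwaway directory instead of /run/secrets.
with tempfile.TemporaryDirectory() as tmp:
    Path(tmp, "API_TOKEN").write_text("from-secret\n")
    settings = resolve_settings(
        env={"LOG_LEVEL": "debug"},
        secrets_dir=Path(tmp),
        defaults={"LOG_LEVEL": "info", "API_TOKEN": ""},
    )
    print(settings["LOG_LEVEL"], settings["API_TOKEN"])
```

Here LOG_LEVEL comes from the environment and API_TOKEN from the secret file, while any setting found in neither keeps its default.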

Configuration reference (power users)

Runtime & safety

| Setting | Description | Accepted values | Default |
|---|---|---|---|
| HEALTHCHECK_INTERVAL | Seconds between scheduler health checks | Integer seconds | 30 |
| RELOAD_MIN_TIMEOUT | Minimum seconds between successive reloads | Integer seconds | 5 |
| DISABLE_CONFIGURATION_TESTING | Skip config tests before applying | yes or no | no |
| IGNORE_FAIL_SENDING_CONFIG | Proceed even if some instances fail to receive a config | yes or no | no |
| IGNORE_REGEX_CHECK | Skip regex validation for settings (shared with autoconf) | yes or no | no |
| TZ | Time zone for scheduler logs, cron-like jobs, backups, and timestamps | TZ database name (e.g., UTC, Europe/Paris) | unset (container default, usually UTC) |

Database

| Setting | Description | Accepted values | Default |
|---|---|---|---|
| DATABASE_URI | Primary database DSN (shared with autoconf and instances) | SQLAlchemy DSN | sqlite:////var/lib/bunkerweb/db.sqlite3 |
| DATABASE_URI_READONLY | Optional read-only DSN; scheduler drops to read-only mode if this is all that works | SQLAlchemy DSN or empty | unset |

Logging

| Setting | Description | Accepted values | Default |
|---|---|---|---|
| LOG_LEVEL, CUSTOM_LOG_LEVEL | Base/override log level | debug, info, warning, error, critical | info |
| LOG_TYPES | Destinations | Space-separated stderr/file/syslog | stderr |
| SCHEDULER_LOG_TO_FILE | Legacy convenience: when set, LOG_FILE_PATH defaults to /var/log/bunkerweb/scheduler.log if LOG_TYPES includes file and you didn't set LOG_FILE_PATH explicitly. | yes/no | no |
| LOG_FILE_PATH | Custom log file path (used when LOG_TYPES includes file) | File path | /var/log/bunkerweb/scheduler.log when LOG_TYPES contains file, else unset |
| LOG_SYSLOG_ADDRESS | Syslog target (udp://host:514, tcp://host:514, or socket path) | Host:port, proto-prefixed host, or socket path | unset |
| LOG_SYSLOG_TAG | Syslog ident/tag | String | bw-scheduler |
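The interaction between LOG_TYPES and LOG_FILE_PATH in the table above can be expressed as a short Python sketch. It only restates the documented defaults; `effective_log_file` is a hypothetical helper, not a function from the scheduler.

```python
from typing import Optional

DEFAULT_SCHEDULER_LOG = "/var/log/bunkerweb/scheduler.log"


def effective_log_file(env: dict[str, str]) -> Optional[str]:
    """Return the log file the scheduler would write to, or None when file logging is off."""
    log_types = env.get("LOG_TYPES", "stderr").split()  # space-separated destinations
    if "file" not in log_types:
        return None  # LOG_FILE_PATH is only used when the file destination is enabled
    return env.get("LOG_FILE_PATH") or DEFAULT_SCHEDULER_LOG


print(effective_log_file({"LOG_TYPES": "stderr file"}))
```

With the file destination enabled and no explicit path, the result is the default scheduler log path listed in the table.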

UI container settings

The UI container also honors TZ to localize logs and scheduled maintenance tasks (such as cleanup jobs initiated by the UI).

| Setting | Description | Accepted values | Default |
|---|---|---|---|
| TZ | Time zone for UI logs and scheduled actions | TZ database name (e.g., UTC, Europe/Paris) | unset (container default, usually UTC) |

Logging

| Setting | Description | Accepted values | Default |
|---|---|---|---|
| LOG_LEVEL, CUSTOM_LOG_LEVEL | Base log level / override | debug, info, warning, error, critical | info |
| LOG_TYPES | Destinations | Space-separated stderr/file/syslog | stderr |
| LOG_FILE_PATH | Path for file logging (used when LOG_TYPES includes file or CAPTURE_OUTPUT=yes) | File path | /var/log/bunkerweb/ui.log when file/capture enabled, else unset |
| LOG_SYSLOG_ADDRESS | Syslog target (udp://host:514, tcp://host:514, socket) | Host:port, proto-prefixed host, or path | unset |
| LOG_SYSLOG_TAG | Syslog ident/tag | String | bw-ui |
| CAPTURE_OUTPUT | Send Gunicorn stdout/stderr to the configured log outputs | yes or no | no |

Networks

By default, the BunkerWeb container listens (inside the container) on 8080/tcp for HTTP, 8443/tcp for HTTPS, and 8443/udp for QUIC.

Privileged ports in rootless mode or when using Podman

If you are using Docker in rootless mode and want to redirect privileged ports (< 1024) like 80 and 443 to BunkerWeb, please refer to the prerequisites here.

If you are using Podman, you can lower the minimum number for unprivileged ports:

sudo sysctl net.ipv4.ip_unprivileged_port_start=1

The typical BunkerWeb stack when using Docker integration contains the following containers:

- BunkerWeb

- Scheduler

- Your services

For defense-in-depth purposes, we strongly recommend creating at least three different Docker networks:

- bw-services: for BunkerWeb and your web services

- bw-universe: for BunkerWeb and the scheduler

- bw-db: for the database (if you are using one)

To secure communication between the scheduler and the BunkerWeb API, authorize API calls. Use the API_WHITELIST_IP setting to specify allowed IP addresses and subnets. For stronger protection, set API_TOKEN in both containers; the scheduler will automatically include Authorization: Bearer <token>.

It is strongly recommended to use a static subnet for the bw-universe network to enhance security. By implementing these measures, you can ensure that only authorized sources can access the BunkerWeb API, reducing the risk of unauthorized access or malicious activities:

x-bw-api-env: &bw-api-env
  # We use an anchor to avoid repeating the same settings for both containers
  API_WHITELIST_IP: "127.0.0.0/24 10.20.30.0/24"
  # Optional API token for authenticated API access
  API_TOKEN: ""

services:
  bunkerweb:
    image: bunkerity/bunkerweb:1.6.11
    ports:
      - "80:8080/tcp"
      - "443:8443/tcp"
      - "443:8443/udp" # QUIC
    environment:
      <<: *bw-api-env
    restart: "unless-stopped"
    networks:
      - bw-services
      - bw-universe
...
  bw-scheduler:
    image: bunkerity/bunkerweb-scheduler:1.6.11
    environment:
      <<: *bw-api-env
      BUNKERWEB_INSTANCES: "bunkerweb" # This setting is mandatory to specify the BunkerWeb instance
    volumes:
      - bw-storage:/data # This is used to persist the cache and other data like backups
    restart: "unless-stopped"
    networks:
      - bw-universe
...
volumes:
  bw-storage:

networks:
  bw-universe:
    name: bw-universe
    ipam:
      driver: default
      config:
        - subnet: 10.20.30.0/24 # Static subnet so only authorized sources can access the BunkerWeb API
  bw-services:
    name: bw-services
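To see which source addresses a value such as "127.0.0.0/24 10.20.30.0/24" admits, the check can be reproduced with Python's standard `ipaddress` module. This is only a sketch of how a space-separated CIDR list can be evaluated, not BunkerWeb's own implementation; `is_allowed` is a hypothetical helper.

```python
import ipaddress


def is_allowed(client_ip: str, whitelist: str) -> bool:
    """True when client_ip falls inside any CIDR range of a space-separated whitelist."""
    address = ipaddress.ip_address(client_ip)
    networks = [ipaddress.ip_network(item, strict=False) for item in whitelist.split()]
    return any(address in network for network in networks)


WHITELIST = "127.0.0.0/24 10.20.30.0/24"  # value taken from the compose example

for candidate in ("10.20.30.5", "10.20.31.5", "127.0.0.1", "127.0.1.1"):
    print(candidate, is_allowed(candidate, WHITELIST))
```

Note that 127.0.0.0/24 covers only 127.0.0.0 to 127.0.0.255, so 127.0.1.1 is rejected even though it is a loopback address; a static bw-universe subnet keeps the second range equally predictable.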

Full compose file

x-bw-api-env: &bw-api-env
  # We use an anchor to avoid repeating the same settings for both containers
  API_WHITELIST_IP: "127.0.0.0/24 10.20.30.0/24"

services:
  bunkerweb:
    image: bunkerity/bunkerweb:1.6.11
    ports:
      - "80:8080/tcp"
      - "443:8443/tcp"
      - "443:8443/udp" # QUIC
    environment:
      <<: *bw-api-env
    restart: "unless-stopped"
    networks:
      - bw-universe
      - bw-services

  bw-scheduler:
    image: bunkerity/bunkerweb-scheduler:1.6.11
    depends_on:
      - bunkerweb
    environment:
      <<: *bw-api-env
      BUNKERWEB_INSTANCES: "bunkerweb" # This setting is mandatory to specify the BunkerWeb instance
      SERVER_NAME: "www.example.com"
    volumes:
      - bw-storage:/data # This is used to persist the cache and other data like backups
    restart: "unless-stopped"
    networks:
      - bw-universe

volumes:
  bw-storage:

networks:
  bw-universe:
    name: bw-universe
    ipam:
      driver: default
      config:
        - subnet: 10.20.30.0/24 # Static subnet so only authorized sources can access the BunkerWeb API
  bw-services:
    name: bw-services

Build from source

Alternatively, you can build the Docker images directly from source, which gives you more control over the result. Depending on your hardware, the build may take a while (grab a coffee ☕ if needed).

git clone https://github.com/bunkerity/bunkerweb.git && \

cd bunkerweb && \

docker build -t bw -f src/bw/Dockerfile . && \

docker build -t bw-scheduler -f src/scheduler/Dockerfile . && \

docker build -t bw-autoconf -f src/autoconf/Dockerfile . && \

docker build -t bw-ui -f src/ui/Dockerfile .

Linux

Supported Linux distributions for BunkerWeb (amd64/x86_64 and arm64/aarch64 architectures) include:

- Debian 12 "Bookworm"

- Debian 13 "Trixie"

- Ubuntu 22.04 "Jammy"

- Ubuntu 24.04 "Noble"

- Fedora 42, 43 and 44

- Red Hat Enterprise Linux (RHEL) 8, 9 and 10

Easy installation script

For a simplified installation experience, BunkerWeb provides an easy install script that automatically handles the entire setup process, including NGINX installation, repository configuration, and service setup.

Quick start

To get started, download the installation script and its checksum, then verify the script's integrity before running it.

# Download the script and its checksum

curl -fsSL -O https://github.com/bunkerity/bunkerweb/releases/download/v1.6.11/install-bunkerweb.sh

curl -fsSL -O https://github.com/bunkerity/bunkerweb/releases/download/v1.6.11/install-bunkerweb.sh.sha256

# Verify the checksum

sha256sum -c install-bunkerweb.sh.sha256

# If the check is successful, run the script

chmod +x install-bunkerweb.sh

sudo ./install-bunkerweb.sh

Security Notice

Always verify the integrity of the installation script before running it.

Download the checksum file and use a tool like sha256sum to confirm the script has not been altered or tampered with.

If the checksum verification fails, do not execute the script—it may be unsafe.
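For environments where `sha256sum` is not available, the same check can be sketched with Python's standard library. This assumes the checksum file uses the `sha256sum` layout (hex digest first, then the file name), which the `sha256sum -c` command above implies. `sha256_of` and `verify_checksum` are hypothetical helpers, and the demo works on throwaway files rather than the real installer.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Compute the hex SHA-256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_checksum(script: Path, checksum_file: Path) -> bool:
    """Compare the script's digest with the first field of a sha256sum-style file."""
    expected = checksum_file.read_text().split()[0].lower()
    return sha256_of(script) == expected


# Demo on temporary files: the check passes, then fails once the script changes.
with tempfile.TemporaryDirectory() as tmp:
    script = Path(tmp, "demo.sh")
    script.write_bytes(b"echo hello\n")
    checksum = Path(tmp, "demo.sh.sha256")
    checksum.write_text(f"{sha256_of(script)}  demo.sh\n")
    print(verify_checksum(script, checksum))  # True: file matches its recorded digest
    script.write_bytes(b"echo tampered\n")
    print(verify_checksum(script, checksum))  # False: content changed after the digest was recorded
```

For the real installer you would call `verify_checksum(Path("install-bunkerweb.sh"), Path("install-bunkerweb.sh.sha256"))` and run the script only when it returns True.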

How It Works

The easy install script is a powerful tool designed to streamline the setup of BunkerWeb on a fresh Linux system. It automates the following key steps:

- System Analysis: Detects your operating system and verifies it against the list of supported distributions.

- Installation Customization: In interactive mode, it prompts you to choose an installation type (Full Stack, Manager, Worker, etc.) and decide whether to enable the web-based setup wizard when the selected mode supports it.

- Optional Integrations: Offers to automatically install and configure the CrowdSec Security Engine and Redis/Valkey when they are compatible with the selected installation type.

- Dependency Management: Installs the correct version of NGINX required by BunkerWeb from official sources and locks the version to prevent unintended upgrades.

- BunkerWeb Installation: Adds the BunkerWeb package repository, installs the necessary packages, and locks the version.

- Service Configuration: Sets up and enables the systemd services corresponding to your chosen installation type.

- Post-install Guidance: Provides clear next steps to help you get started with your new BunkerWeb instance.

Interactive Installation

When run without any options, the script enters an interactive mode that guides you through the setup process. The interactive flow uses an inline TUI — arrow-key menus with a ❯ cursor and masked password fields — provided by gum (Charmbracelet, MIT, ~5 MB static binary).

gum is fetched ephemerally on first interactive run

The installer fetches gum the first time it needs an interactive prompt and runs it from a tempdir for the duration of the script — nothing is installed system-wide:

- Downloads the official gum_${VERSION}_${ARCH}.tar.gz from the GitHub release over HTTPS (TLS 1.2+, refuses HTTP redirects, connect-timeout 10 s / total-timeout 30 s).

- Verifies the tarball against a SHA256 pinned in this script (the local trust anchor: both the script's own checksum and the gum binary must match).

- If cosign is installed: also verifies the upstream checksums.txt against Charm's GitHub-Actions OIDC identity (https://github.com/charmbracelet/gum/...) as defense-in-depth, and cross-checks that the pinned hash is the value Charm published for this exact tarball.

- Extracts the binary into an exec-capable tempdir (/var/tmp/bw-gum.XXXXXX by default; /tmp, $XDG_RUNTIME_DIR, or $HOME/.cache when /var/tmp is mounted noexec).

- Adds the tempdir to PATH for the rest of the run and removes it on script exit (via an EXIT trap, even on set -e failures or signals).

What stays on disk after the installer exits: nothing. No /etc/apt/sources.list.d/charm.list, no GPG key in apt/rpm, no gum binary in /usr/bin or /usr/local/bin, no package-db entry. The installer never registers a third-party apt or dnf source.

If gum cannot be downloaded — air-gapped host, network failure, SHA256 mismatch — the installer uses any whiptail already present on the system (commonly preinstalled on Debian/Ubuntu cloud images via the newt package). If neither gum nor whiptail is available, it falls back to plain text prompts.

Pass --no-tui (or set BW_INSTALL_TUI=no) to skip every TUI tier, or --tui to abort if no TUI tier can render. Air-gapped installs: pass --no-tui together with --yes and the relevant --* flags / *_INPUT env vars; no network call is made for the TUI layer.

If the installer is piped (curl … | bash) or stdin is otherwise not a TTY, it exits with a clear error rather than falling through every default — use --yes together with the appropriate --* flags for non-interactive installs in that case.
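The prompt fallback described above can be summarized as a small decision function. The Python sketch below is one reading of that behaviour, not the installer's shell code: `choose_prompt_tier`, `PromptError`, and the mode names "auto", "require" (for --tui), and "off" (for --no-tui) are hypothetical, and the order of the checks is an assumption.

```python
class PromptError(RuntimeError):
    """Raised when the installer would exit instead of prompting."""


def choose_prompt_tier(
    mode: str,
    stdin_is_tty: bool,
    gum_ok: bool,
    whiptail_ok: bool,
    assume_yes: bool = False,
) -> str:
    """Pick the prompt tier: gum, then whiptail, then plain text."""
    if assume_yes:
        return "non-interactive"  # --yes: defaults and flags, no prompts at all
    if not stdin_is_tty:
        raise PromptError("stdin is not a TTY; rerun with --yes and explicit flags")
    if mode == "off":
        return "plain"  # --no-tui or BW_INSTALL_TUI=no skips every TUI tier
    if gum_ok:
        return "gum"
    if whiptail_ok:
        return "whiptail"
    if mode == "require":
        raise PromptError("--tui was given but no TUI tier can render")
    return "plain"


# gum download failed (for example on an air-gapped host) but whiptail is installed.
print(choose_prompt_tier("auto", stdin_is_tty=True, gum_ok=False, whiptail_ok=True))
```

The only two paths that raise are the ones the text describes as hard stops: a non-TTY stdin without --yes, and --tui when neither gum nor whiptail is usable.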

You will be asked to make the following choices:

- Installation Type: Select the components you want to install.

- Full Stack (default): An all-in-one installation including BunkerWeb, the Scheduler, and the Web UI.

- Manager: Installs the Scheduler and Web UI, intended to manage one or more remote BunkerWeb workers. Can optionally include the API service.

- Worker: Installs only the BunkerWeb instance, which can be managed by a remote Manager.

- Scheduler Only: Installs only the Scheduler component.

- Web UI Only: Installs only the Web UI component.

- API Only: Installs only the API service for programmatic access.

- Setup Wizard: Choose whether to enable the web-based configuration wizard when the selected mode includes the Web UI and supports the wizard. Manager mode always disables the wizard.

- CrowdSec Integration: Opt-in to install the CrowdSec security engine for advanced, real-time threat protection. The interactive prompt is shown for Full Stack installations only; CLI flags can also enable CrowdSec for Manager installations.

- CrowdSec AppSec: If you choose to install CrowdSec, you can also enable the Application Security (AppSec) component, which adds WAF capabilities.

- Redis/Valkey Integration: Enable Redis/Valkey to share session data, metrics, and security data across nodes for seamless clustering and load balancing. You can install locally or point to an existing server. Available for Full Stack and Manager installations only.

- Database: For Full Stack and Manager installations, choose SQLite, a local MariaDB/PostgreSQL install, or an existing external database.

- Web UI Admin User: For UI-bearing installations, choose whether to pre-create the first admin user. The installer defaults to creating one when the wizard is disabled.

- DNS Resolvers: For Full Stack, Manager, and Worker installations, you can optionally specify custom DNS resolver IPs.

- Internal API HTTPS: For Full Stack, Manager, and Worker installations, choose whether to enable HTTPS for internal API communication between the scheduler/manager and BunkerWeb/worker instances (default: HTTP only).

- API Service: For Full Stack and Manager installations, choose whether to enable the optional external API service. It is disabled by default on Linux installations.

Manager and Scheduler installations

If you choose the Manager or Scheduler Only installation type, you will also be prompted for the IP addresses or hostnames of your BunkerWeb worker instances. This list is optional during install; if you leave it empty, the installer warns and you can add workers later.

Command-Line Options

For non-interactive or automated setups, the script can be controlled with command-line flags:

General Options:

| Option | Description |
|---|---|
| -v, --version VERSION | Specifies the BunkerWeb version to install (e.g., 1.6.11). |
| -w, --enable-wizard | Enables the setup wizard. |
| -n, --no-wizard | Disables the setup wizard. |
| -y, --yes | Runs in non-interactive mode using default answers for all prompts. |
| --tui | Require a TUI (downloaded gum or existing whiptail) and abort if no TUI tier can render. |
| --no-tui | Disable all TUI tiers and use the legacy plain-text prompts. Equivalent to BW_INSTALL_TUI=no. |
| -f, --force | Forces the installation to proceed even on an unsupported OS version. |
| --force-type-change | Allow --<type> to differ from the detected install type on upgrade (intentional HA migrations only). |
| -q, --quiet | Silent installation (suppress output; implies --yes). |
| --api, --enable-api | Enables the API (FastAPI) systemd service (disabled by default). |
| --no-api | Explicitly disables the API service. |
| --server-ip IP | IP printed in post-install URLs. Overrides auto-detection and can also be set with SERVER_IP_INPUT. |
| --epel | Install epel-release on RHEL-family distributions if it is missing. |
| --no-epel | Do not install epel-release on RHEL-family distributions. |
| -h, --help | Displays the help message with all available options. |
| --dry-run | Show what would be installed without doing it. |

Installation Types:

| Option | Description |
|---|---|
| --full | Full stack installation (BunkerWeb, Scheduler, UI). This is the default. |
| --manager | Installs the Scheduler and UI to manage remote workers. |
| --worker | Installs only the BunkerWeb instance. |
| --scheduler-only | Installs only the Scheduler component. |
| --ui-only | Installs only the Web UI component. |
| --api-only | Installs only the API service (port 8888). |

Security Integrations:

| Option | Description |
|---|---|
| --crowdsec | Install and configure CrowdSec security engine. |
| --no-crowdsec | Skip CrowdSec installation. |
| --crowdsec-appsec | Install CrowdSec with AppSec component (includes WAF capabilities). |
| --redis | Install and configure Redis locally. |
| --no-redis | Skip Redis integration. |
| --redis-flavor FLAVOR | Local install flavor: redis (default) or valkey. |

Advanced Options:

| Option | Description |
|---|---|
| --instances "IP1 IP2" | Space-separated list of BunkerWeb instances (optional for manager/scheduler modes; you can add workers later). |
| --manager-ip IPs | Manager/Scheduler IPs to whitelist (required for worker in non-interactive mode). |
| --dns-resolvers "IP1 IP2" | Custom DNS resolver IPs (for full, manager, or worker installations). |
| --api-https | Enable HTTPS for internal API communication (default: HTTP only). |
| --backup-dir PATH | Directory to store automatic backup before upgrade. |
| --no-auto-backup | Skip automatic backup (you MUST have done it manually). |
| --redis-host HOST | Redis host for an existing Redis/Valkey server. |
| --redis-port PORT | Redis port for an existing Redis/Valkey server. |
| --redis-database DB | Redis database number. |
| --redis-username USER | Redis username (Redis 6+). |
| --redis-password PASS | Redis password. |
| --redis-bind IP | Redis/Valkey bind address for a local Manager install (default prompt: 0.0.0.0). |
| --redis-no-password | Skip the auto-generated requirepass when binding Redis/Valkey beyond loopback. |
| --redis-maxmemory MB | Memory cap in MB; 0 or unlimited keeps the distribution default. |
| --redis-maxmemory-policy POLICY | Eviction policy for local Redis/Valkey (default: volatile-lru). |
| --redis-ssl | Enable SSL/TLS for Redis connection. |
| --redis-no-ssl | Disable SSL/TLS for Redis connection. |
| --redis-ssl-verify | Verify Redis SSL certificate. |
| --redis-no-ssl-verify | Do not verify Redis SSL certificate. |

Database Options (--full / --manager only):

| Option | Description |
|---|---|
| --database ENGINE | Strategy: mariadb or postgresql (auto-install locally), external (use an existing remote DB), none (SQLite). |
| --db-engine ENGINE | External-DB engine: mariadb, mysql, or postgresql. Implies --database external when set on its own. |
| --db-host HOST | External DB host (FQDN or IP). |
| --db-port PORT | External DB TCP port (defaults: 3306 for mariadb/mysql, 5432 for postgresql). |
| --db-name NAME | Database name (default: bw_db). |
| --db-user USER | Database user (default: bunkerweb). |
| --db-password PASS | Database password (required for --database external). Rules: 8+ chars, no quotes/backslash/backtick. |
| --db-ssl | Use SSL/TLS for the external DB connection. |
| --db-no-ssl | Do not use SSL/TLS for the external DB connection. |
| --db-ssl-verify | Verify the external DB server certificate. |
| --db-no-ssl-verify | Use SSL but skip certificate verification. |
| --db-skip-probe | Do not probe external DB connectivity from this host. Useful when the engine client is not installed locally, or when the DB is only reachable from the scheduler's network segment. |
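
The documented port defaults and password rules can be checked before calling the installer. The following is a minimal sketch, not the installer's own code: the function names default_db_port and db_password_ok are hypothetical helpers introduced here for illustration, and they only mirror the rules stated in the table above.

```shell
#!/bin/sh
# Hypothetical pre-flight helpers; NOT part of install-bunkerweb.sh.

# Print the documented default TCP port for an external DB engine.
# Returns non-zero for an engine the table does not list.
default_db_port() {
    case "$1" in
        mariadb|mysql) echo 3306 ;;
        postgresql)    echo 5432 ;;
        *)             return 1 ;;
    esac
}

# Return 0 if the password follows the documented rules:
# at least 8 characters, no quotes, no backslash, no backtick.
db_password_ok() {
    [ "${#1}" -ge 8 ] || return 1
    case "$1" in
        *\'*|*\"*|*\\*|*\`*) return 1 ;;
    esac
    return 0
}
```

A wrapper script could call db_password_ok on a value read from a secrets manager and stop early, rather than waiting for the installer to reject it.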

Web UI Admin User (--full / --manager / --ui-only only):

When no UI admin flag is provided, the interactive installer offers a Web UI admin-user prompt for UI-bearing install types. The default answer depends on the wizard state. It is Yes when the wizard is disabled (always in manager mode; in other modes when --no-wizard is passed), because otherwise the UI has no initial login. It is No when the wizard is enabled, because the wizard collects the admin user on first boot. Operators can still opt in to pre-create the admin with the wizard enabled, which skips the wizard's admin step.
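
The default described above can be summarized as a small decision function. This is an illustrative sketch only: admin_prompt_default is a hypothetical name, and the "enabled"/"disabled" strings are assumptions chosen for readability, not values used by the installer.

```shell
#!/bin/sh
# Hypothetical helper; NOT taken from install-bunkerweb.sh.
# Prints the default answer ("yes" or "no") for the admin-user prompt.
#   $1 = install type: full, manager, or ui-only
#   $2 = wizard state: enabled or disabled
admin_prompt_default() {
    install_type=$1
    wizard=$2
    # Manager mode always runs without the wizard, per the paragraph above.
    if [ "$install_type" = "manager" ]; then
        wizard=disabled
    fi
    if [ "$wizard" = "disabled" ]; then
        echo yes   # otherwise the UI would have no initial login
    else
        echo no    # the wizard collects the admin user on first boot
    fi
}
```

For example, admin_prompt_default full enabled prints no, while admin_prompt_default manager enabled prints yes.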

| Option | Description |
|---|---|
| --ui-admin-user NAME | Pre-create the first Web UI admin user with this name (skips the setup wizard for the admin step). |
| --ui-admin-password PASS | Password for the admin user. Implies admin creation; the username defaults to admin if omitted. Auto-generated when omitted. Rules: 8+ chars, lower/upper/digit/special. |
| --no-ui-admin | Skip the admin-user creation prompt entirely. If the wizard is disabled, the UI remains without an initial login until credentials are configured another way. |
| --ui-https-selfsigned | (--manager only) Generate a self-signed cert and enable HTTPS on the Web UI listener. |
| --no-ui-https-selfsigned | (--manager only) Disable manager UI self-signed HTTPS. |
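
The password rules for --ui-admin-password can be pre-checked in a wrapper script. The sketch below is an assumption-laden illustration: ui_password_ok is a hypothetical helper, and it treats "special" as any non-alphanumeric character, which may be broader or narrower than what the installer actually accepts.

```shell
#!/bin/sh
# Hypothetical pre-flight check; NOT part of install-bunkerweb.sh.
# Returns 0 if the password has 8+ characters and at least one
# lowercase letter, one uppercase letter, one digit, and one
# non-alphanumeric character (assumed meaning of "special").
ui_password_ok() {
    [ "${#1}" -ge 8 ] || return 1
    case "$1" in *[[:lower:]]*) ;; *) return 1 ;; esac
    case "$1" in *[[:upper:]]*) ;; *) return 1 ;; esac
    case "$1" in *[[:digit:]]*) ;; *) return 1 ;; esac
    case "$1" in *[![:alnum:]]*) ;; *) return 1 ;; esac
    return 0
}
```

Each case statement tests one character class, so a failing password can be traced to the first rule it violates by adding a message before the matching return.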

External database notes

- --database external requires --db-engine, --db-host, and --db-password in non-interactive mode. --db-name and --db-user have defaults.

- For production, always pair --db-ssl with --db-ssl-verify. --db-no-ssl-verify accepts unauthenticated certificates and leaves the channel open to active MitM.

- The installer probes connectivity once the DSN is built. If the engine client (mariadb/mysql/psql) is not installed locally, it warns and skips the probe. If the probe runs and fails, interactive mode asks whether to write the DSN anyway; non-interactive mode aborts unless --db-skip-probe is set.

- Replace YourStrongDbPassword/YourStrongUiPassw0rd! in the examples below with values from a secrets manager before running the command.
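
The probe behavior in the notes above amounts to a short decision table. The sketch below only restates that text: probe_outcome and its outcome labels (skip, warn-and-skip, write-dsn, ask-user, abort) are hypothetical names introduced for illustration and do not correspond to installer output.

```shell
#!/bin/sh
# Hypothetical model of the documented probe behavior;
# NOT taken from install-bunkerweb.sh.
# Arguments (each "yes" or "no"):
#   $1 skip_probe   : --db-skip-probe was passed
#   $2 client_found : engine client (mariadb/mysql/psql) is installed
#   $3 probe_ok     : the connectivity probe succeeded
#   $4 interactive  : the installer is running interactively
probe_outcome() {
    if [ "$1" = "yes" ]; then echo "skip"; return 0; fi
    if [ "$2" = "no" ]; then echo "warn-and-skip"; return 0; fi
    if [ "$3" = "yes" ]; then echo "write-dsn"; return 0; fi
    if [ "$4" = "yes" ]; then
        echo "ask-user"   # interactive: ask whether to write the DSN anyway
    else
        echo "abort"      # non-interactive: stop
    fi
}
```

Under this model, a non-interactive run against a database that is reachable only from the scheduler's network segment ends in abort unless --db-skip-probe is passed.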

Example Usage:

# Run in interactive mode (recommended for most users)

sudo ./install-bunkerweb.sh

# Non-interactive installation with defaults (full stack, wizard enabled)

sudo ./install-bunkerweb.sh --yes

# Install a Worker node interactively without the setup wizard

sudo ./install-bunkerweb.sh --worker --no-wizard

# Install a specific version

sudo ./install-bunkerweb.sh --version 1.6.11

# Manager setup with remote worker instances (optional at install time)

sudo ./install-bunkerweb.sh --manager --instances "192.168.1.10 192.168.1.11"

# Manager with HTTPS internal API communication

sudo ./install-bunkerweb.sh --manager --instances "192.168.1.10 192.168.1.11" --api-https

# Worker with custom DNS resolvers and HTTPS internal API

sudo ./install-bunkerweb.sh --worker --dns-resolvers "1.1.1.1 1.0.0.1" --api-https

# Full installation with CrowdSec and AppSec

sudo ./install-bunkerweb.sh --crowdsec-appsec

# Manager installation with CrowdSec enabled from the CLI

sudo ./install-bunkerweb.sh --manager --crowdsec

# Full installation using an existing Redis server

sudo ./install-bunkerweb.sh --redis-host redis.example.com --redis-password "your-strong-password"

# Full installation against an existing external MariaDB

sudo ./install-bunkerweb.sh --yes --no-wizard \
  --database external --db-engine mariadb \
  --db-host mariadb.example.com --db-port 3306 \
  --db-name bw_db --db-user bunkerweb --db-password 'YourStrongDbPassword' \
  --db-ssl --db-ssl-verify \
  --ui-admin-user admin --ui-admin-password 'YourStrongUiPassw0rd!'

# Full installation against an existing external PostgreSQL

sudo ./install-bunkerweb.sh --yes --no-wizard \
  --database external --db-engine postgresql \
  --db-host pg.example.com --db-port 5432 \
  --db-name bw_db --db-user bunkerweb --db-password 'YourStrongDbPassword' \
  --ui-admin-user admin --ui-admin-password 'YourStrongUiPassw0rd!'

# Pre-create the admin user on a full install (random password printed at the end)

sudo ./install-bunkerweb.sh --no-wizard --ui-admin-user admin

# Silent non-interactive installation

sudo ./install-bunkerweb.sh --quiet --yes

# Preview installation without executing

sudo ./install-bunkerweb.sh --dry-run

# Enable the API during easy install (non-interactive)

sudo ./install-bunkerweb.sh --yes --api